by LDeX Group | Apr 15, 2019 | Blog, Group News, Press

It’s been a busy few months at LDeX as we’ve worked to position the business to support our customers’ future requirements. Access to the services of the hyper cloud vendors – Microsoft Azure, AWS and Google Cloud – is becoming a key part of any digital strategy and we want to ensure that our customers can access those services so they can innovate and grow.

As a result LDeX has become part of iomart Group plc, one of the country’s leading providers of managed cloud services and a company that is listed on the Alternative Investment Market of the London Stock Exchange (IOM: AIM). This is an important step forward for us. As part of a much larger organisation LDeX has the financial strength, stability and wider expertise to support our customers in the years ahead.

LDeX is committed to delivering the level of service that our customers have come to value. Our commitment to reliability, resourcefulness and personability remains and is strengthened because of the ability that iomart gives us to offer a much wider portfolio of cloud services.

As Mark Sedgley, Group Sales Manager for LDeX, explains: “We’ve built a reputation as one of the most dependable providers of carrier neutral, premium colocation space in both London and Manchester. Our goal is to offer our clients the ultimate hosting environment for their colocation deployments, underpinned by an impressive portfolio of connectivity services.

“Joining the iomart Group has come at an ideal time in our journey and will enable us to offer a complementary product set to our customers. We very much look forward to working with our iomart colleagues and are excited about what the future holds.”

We have today changed our company branding to reflect this.

If you would like to know more about our parent company iomart please visit www.iomart.com

-ends-

by LDeX Group | Mar 14, 2018 | Blog, Press

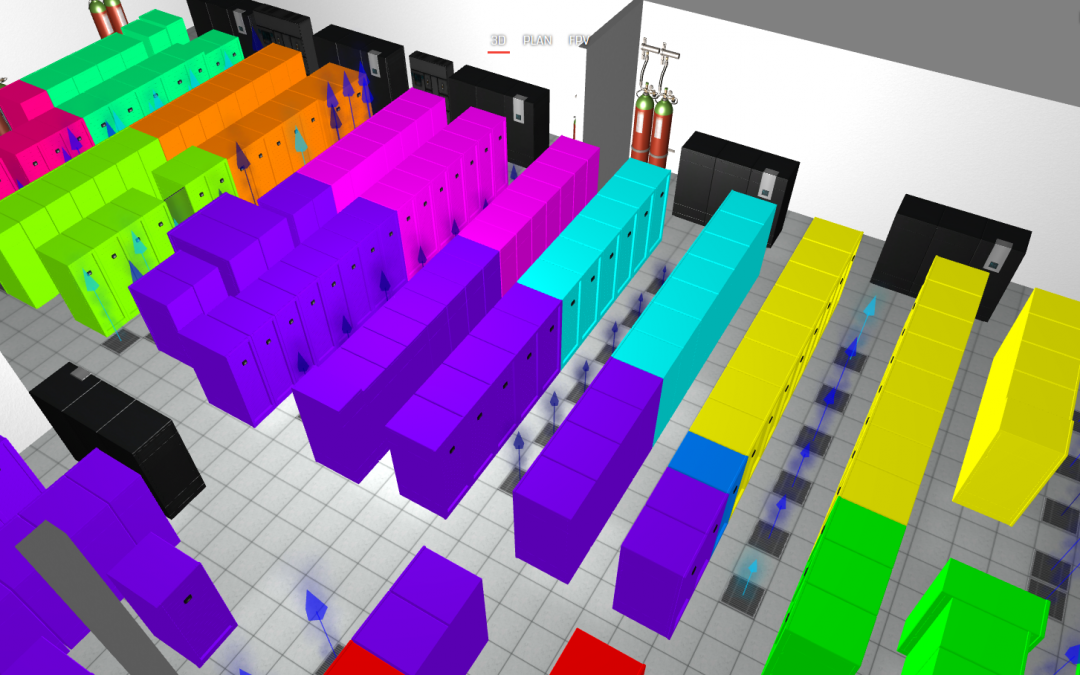

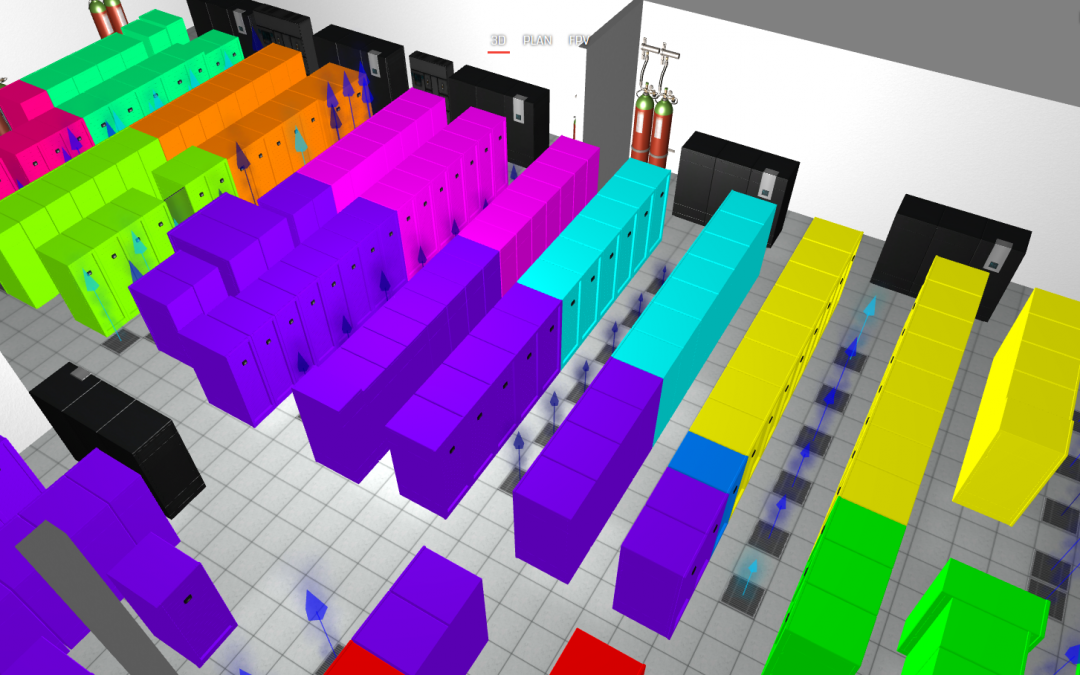

UK-based data centre thermal software and services specialist firm EkkoSense will demonstrate its innovative software-driven thermal optimisation solutions for critical facilities on stand D1210 at Data Centre World 2018 at ExCeL London on March 21-22.

Unlike traditional critical cooling approaches, EkkoSense harnesses the potential of the ‘fully-sensed’ data centre to enable entirely new levels of thermal compliance, cooling energy cost reduction and capacity optimisation. At DCW18 EkkoSense will demonstrate a series of sensor, software and operational innovations that will confirm the company as a leader in the provision of thermal optimisation solutions for critical live environments. Key announcements will include:

- The world’s first Internet-of-Things (IoT) enabled wireless sensor to provide a local display of measured temperature and relative humidity values. The EkkoSensor wireless display sensor can show temperature profiles over the last hour, 24 hours or week for immediate on-site thermal assessment, and integrates with EkkoSoft Critical 3D software to provide real-time virtual reality visualisation.

- Real-time tracking of cooling loads with EkkoAir – EkkoAir is the world’s first thermal monitoring solution to track data centre cooling loads in real-time. When combined with EkkoSoft critical thermal modelling, monitoring and visualisation software, EkkoAir provides data centre operators with an intuitive, holistic 3D real-time view of cooling performance across their entire data centre estate – enabling them to reduce thermal risk and save between 20 and 30% of their overall data centre cooling costs.

- The combination of low-cost wireless IoT sensors and powerful spatial software enables the creation of detailed rack-level maps of a data centre’s real-time cooling and thermal performance. Taking advantage of this network of IoT sensors, EkkoSense will be demonstrating its new ‘Zone of Influence’ functionality at DCW18. By associating specific cooling devices with racks in dedicated ‘Zones of Influence’, EkkoSense is now able to combine its real-time cooling and live airflow data to enable zone-by-zone cooling optimisation that’s far more cost-effective than traditional sensors and control hardware.

Enabling real-time decision-making with EkkoSense

By collecting thermal data from potentially thousands of wireless sensors, EkkoSense also collects the core machine learning/AI data that is necessary to power next generation real-time decision-making based on proven space, cooling and power algorithms. Available at less than 20% of the cost of a traditional data centre cooling unit, the EkkoSense software-driven thermal optimisation approach also provides a platform for the kind of real-time decision-making and scenario planning capabilities that organisations will need to transition towards true AI-managed data centres.

by LDeX Group | Mar 14, 2018 | Blog, Press

More data centre professionals are taking the opportunity to turn their data centre experience into a master’s degree with the world’s only master’s degree in Data Centre Leadership and Management, delivered by CNet Training, an Associate College of Anglia Ruskin University. Since launching in 2015, impressive numbers have been recorded and are continuing to steadily increase.

The master’s degree in Data Centre Leadership and Management is a three-year programme delivered through online distance learning, fully supported by a team of sector experts, who are available through online or telephone communication.

The distance learning delivery of the programme means that learners can study at times that are convenient to them, yet they can still easily communicate with their tutors and each other, wherever they are in the world. There is also a chance to participate in a three-day ‘bootcamp’, held each year in the historic city of Cambridge – this is an optional activity and a great opportunity to get together with the tutors and fellow learners, make contacts and have fun.

The master’s degree is a level seven programme which sits at the top of The Global Digital Infrastructure Education Framework. It is designed in collaboration with the industry and the content is constantly refreshed to ensure it reflects the very latest needs of the industry.

It’s a unique programme – at present, no other programme offers data centre professionals this high-level leadership and management education specifically tailored to the data centre sector.

The Global Digital Infrastructure Education Framework spans qualification level three all the way up to level seven and is constantly expanding with new programmes. Each programme has been designed to address the skills and knowledge requirements of those working in different areas of the digital infrastructure industry. All programmes flow from one to another, yet each is of equal value when standing alone.

On successful completion, learners receive a prestigious master’s degree in Data Centre Leadership and Management, which provides an impressive MA post-nominal title, at a full graduation ceremony in Cambridge. This October will bring another world first for CNet when the first cohort from 2015 graduates to become the first ever to hold the world’s only master’s degree in Data Centre Leadership and Management. They will be the first group of data centre professionals throughout the world to hold this title, setting themselves apart from the rest.

Andrew Stevens, CNet’s president and CEO, comments, “I am delighted by the popularity of the master’s degree in Data Centre Leadership and Management. It’s still going from strength to strength and I predict it to become even more popular in the future. I’m really looking forward to the first graduation ceremony this October; it’s going to be a significant part of not only CNet’s history, but also for those who are graduating. The degree is creating an elite group of data centre professionals which shows the commitment and hard work the learners have put in and they are being rewarded with the world’s only master’s degree in Data Centre Leadership and Management.”

Registration is now open for the next programme which will commence on September 17, 2018.

by LDeX Group | Mar 12, 2018 | Blog, Press

According to the International Data Corporation (IDC) Worldwide Quarterly Server Tracker, vendor revenue in the worldwide server market increased 26.4% year over year to $20.7 billion in the fourth quarter of 2017 (4Q17).

The server market continues to gain momentum, as traction for newer Purley and EPYC-based offerings grows.

While demand from cloud service providers has propped up overall market performance, other areas of the server market continue to show growth now as well.

Worldwide server shipments increased 10.8% year over year to 2.84 million units in 4Q17.

Volume server revenue increased by 21.9% to $15.8 billion, while midrange server revenue grew 48.5% to $1.9 billion.

High-end systems grew 41.1% to $2.9 billion, driven by IBM’s z14 launch last quarter.

IDC expects continued long-term secular declines in high-end system revenue, with short periods of growth related to major platform refreshes.

IDC senior research analyst Sanjay Medvitz says, “Hyperscalers remained a central driver of volume demand in the fourth quarter with leaders such as Amazon, Facebook, and Google continuing their data center expansions and updates.

“ODMs continue to be the primary beneficiaries from hyperscale server demand, some OEMs are also finding growth in this area, but the competitive dynamic of this market has also driven many OEMs such as HPE to focus on the enterprise.

“For example, HPE/New H3C Group grew 38.6% and 114.6% in High-End and Midrange Enterprise Servers, respectively. Other highlights in the quarter include robust growth from Dell Inc., which continues to capitalize on expanded opportunities from its merger with EMC, and IBM, which experienced another successful quarter from its refreshed system z business.”

HPE/New H3C Group and Dell were statistically tied for first in the worldwide server market with 18.4% and 17.5% market shares, respectively, in 4Q17.

HPE/New H3C Group revenue increased 10.1% year over year to $3.8 billion, while Dell Inc. increased 39.9% year over year to $3.6 billion.

HPE’s share and year-over-year growth rate include revenues from the H3C joint venture in China that began in May 2016; the reported HPE/New H3C Group figure therefore combines server revenue for both companies globally.

IBM captured the third market position at 13.0% share with revenue growing 50.3% year over year to $2.7 billion.

Lenovo and Cisco were statistically tied for the fourth position.

Lenovo had 5.3% share, with revenue increasing 15.1% to $1.1 billion and Cisco had 5.1% share with revenue increasing 14.8% to $1.1 billion.

The ODM Direct group of vendors grew revenue by 48.1% to $4.2 billion. Dell Inc. led the server market in terms of unit share at 20.5%.
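As a rough consistency check on the figures above, each vendor's quoted market share can be multiplied by the quoted $20.7 billion total and compared with the vendor's quoted revenue. The sketch below uses only numbers from this article; the function names and the $0.1 billion rounding tolerance are illustrative assumptions, not part of IDC's methodology.

```python
# Figures quoted in the article for 4Q17 (revenue in billions of US dollars).
TOTAL_REVENUE_BN = 20.7

# vendor: (quoted market share in %, quoted revenue in $bn)
REPORTED = {
    "HPE/New H3C Group": (18.4, 3.8),
    "Dell Inc.": (17.5, 3.6),
    "IBM": (13.0, 2.7),
    "Lenovo": (5.3, 1.1),
    "Cisco": (5.1, 1.1),
}


def implied_revenue(share_pct, total_bn=TOTAL_REVENUE_BN):
    """Revenue implied by a market share percentage of the quoted total."""
    return total_bn * share_pct / 100


def check(reported, tolerance_bn=0.1):
    """Map each vendor to (implied revenue, whether it matches the quoted revenue).

    tolerance_bn is an assumed allowance for rounding in the quoted figures.
    """
    results = {}
    for vendor, (share_pct, revenue_bn) in reported.items():
        implied = implied_revenue(share_pct)
        results[vendor] = (implied, abs(implied - revenue_bn) <= tolerance_bn)
    return results


if __name__ == "__main__":
    for vendor, (implied, ok) in check(REPORTED).items():
        print(f"{vendor}: implied ${implied:.2f}bn, consistent={ok}")
```

Under these assumptions, all five quoted shares line up with the quoted revenues once rounding is allowed for.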

by LDeX Group | Mar 12, 2018 | Blog, Press

Hyperscale operator capex boomed in the last quarter of 2017, witnessing a 19% growth over 2016.

That is according to new data from Synergy Research Group, which shows that the capex of hyperscale operators totalled $22 billion in the quarter and reached almost $75 billion for the full year.

Much of that hyperscale capex goes towards building and expanding huge data centers, which have now grown in number to 400.

The top five spenders are Google, Microsoft, Amazon, Apple and Facebook, which in aggregate account for over 70% of Q4 hyperscale capex.

On average during 2017, the top five of Google, Amazon, Microsoft, Apple and Facebook spent well over $13 billion per quarter combined.

The report also puts an emphasis on Amazon and Facebook as having particularly strong capex growth in 2017.

Beyond the top five, the report names the hyperscale market’s other major spenders as Alibaba, IBM, Oracle, SAP and Tencent.

Among these five, Alibaba capex more than doubled in 2017, while growth at both Oracle and SAP was also above average.

Other notables outside of the top ten include Baidu, eBay, JD.com, NTT, PayPal, Salesforce, Yahoo Japan and Yahoo/Oath.

Across all hyperscale operators, 2017 capex equated to just over 7% of total revenues, although the ratio varies greatly by company, from a low of 2% to a high of 17% depending on the nature of the business.

Synergy Research Group chief analyst and research director John Dinsdale says over the last four years the firm has seen many companies try and fail to compete with the leading cloud providers.

“The capex analysis emphasizes the biggest reason why those cloud providers are so difficult to challenge.”

“Can you afford to pump at least a billion dollars a quarter into your data center capex budget? If you can’t, then your ability to meaningfully compete with the market leaders is severely limited.”

“Of course, factors other than capex are at play, but the basic financial table stakes are enormous.”

by LDeX Group | Mar 12, 2018 | Blog, Press

In the data center industry, the only thing that’s certain is change.

And this change, like much of the world, is being driven by data. According to IDC, the global datasphere will grow to a whopping 163 zettabytes by 2025. There are a number of ways of putting this into perspective.

My favourite analogy to describe the vastness of our future data production is this one:

If each Petabyte in a Zettabyte were a centimetre,

then we could reach a height 12 times higher than the Burj Khalifa

(the world’s tallest building at 828 meters high).
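The arithmetic behind the analogy can be sketched as follows. It assumes decimal (SI) prefixes, so one zettabyte is one million petabytes, and it reads the analogy as describing a single zettabyte; the function and constant names are illustrative only.

```python
# Assumption: decimal SI prefixes (1 PB = 10**15 bytes, 1 ZB = 10**21 bytes).
PETABYTES_PER_ZETTABYTE = 10**21 // 10**15  # 1,000,000
CM_PER_METRE = 100
BURJ_KHALIFA_M = 828       # height quoted in the article
DATASPHERE_2025_ZB = 163   # IDC projection quoted in the article


def stack_height_m(zettabytes):
    """Height in metres of a stack where every petabyte is one centimetre."""
    return zettabytes * PETABYTES_PER_ZETTABYTE / CM_PER_METRE


one_zb_height_m = stack_height_m(1)                      # 10,000 m, i.e. 10 km
ratio_one_zb = one_zb_height_m / BURJ_KHALIFA_M          # a little over 12
full_height_m = stack_height_m(DATASPHERE_2025_ZB)       # 1,630,000 m

if __name__ == "__main__":
    print(f"1 ZB stack: {one_zb_height_m:,.0f} m, {ratio_one_zb:.1f}x the Burj Khalifa")
    print(f"163 ZB stack: {full_height_m / 1000:,.0f} km")
```

On this reading, the "12 times" figure corresponds to one zettabyte; scaling the same stack to the full 163 zettabytes would give roughly 1,630 km.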

With more data, from more sources, anticipated over the coming years – the heat is on for operators and businesses to “handle” the growing data deluge as effectively and safely as possible.

In a bid to drive competitive edge, gain control of their costs, and remove themselves from the ever-growing task of data storage, large enterprises and medium-sized businesses are increasingly moving away from on-premises data centres and relying more on third-party providers.

This is a trend we can expect to see grow throughout the year for a number of reasons, such as:

Using expert cloud providers enables innovation on a new level

For many, this seems to be the primary driver.

As John Nichols, director of enterprise architecture at California utility PG&E, pointed out:

“Things may appear similar to legacy data center technologies, but the cloud operates in exponential terms. It is radically different.”

Indeed, cloud services today enable IT departments to do a lot more than simply store data efficiently.

More data means more strain on the power grid

Most of us understand that data centers consume massive amounts of power.

The good news here is that rather than use more and more power (and create more harmful emissions) to run on-premises data centers, enterprises are shifting workloads into third-party facilities that are investing heavily in green power and next-generation cooling technologies.

Data center technologies are evolving rapidly

Cooling technologies aren’t the only aspect of data center operations that is changing.

In fact, AI and machine learning are quickly becoming a requirement in order to compute larger amounts of data and make faster, smarter decisions.

Rather than try to keep up with the rapid pace of innovation (and the capital investment that goes along with it), enterprises are choosing to rely on companies that specialize exclusively in data center operations.

Security is a top concern

For many, the perceived risk of data loss or damage is becoming too much to manage.

At this point, everyone has seen the damage that incidents like data breaches and downtime can cause a business.

As cyber attacks become more sophisticated and common, and data becomes more abundant and precious, many organizations see this as reason enough to colocate in a third-party facility with a reputation for security and reliability.

Data centers can address compliance

Last, but certainly not least, data centers can help users get to grips with compliance, often having dedicated and comprehensive compliance programs to address individual needs.

With compliance regularly evolving, data centers have fast become a reliable and safe way to meet government and trade body regulations, rather than businesses needing to commit a dedicated resource to the task.

by LDeX Group | Mar 12, 2018 | Blog, Press

There is a lot of murkiness around cloud solutions and data centres because the distinctions aren’t always made clear – Paessler sets them out here in black and white.

The main difference between a data centre and the cloud is that in-house IT departments are usually responsible for the hardware and networking when running a data centre; with the cloud, you pay another company to do that for you. That doesn’t mean the responsibility for those cloud services is outsourced though. The in-house IT team still has to monitor system availability and meet their SLAs.

Data centres are generally more attractive to companies with sensitive information. Such companies may even have a policy that prohibits third-party access to their data. Cloud services, on the other hand, offer flexibility and scalability: you only pay for what you use.

Going Cloud

“Going Cloud” is not simply moving Virtual Machines to AWS, Azure or Google, or getting rid of the servers. It’s the chance to rethink processes, to upgrade, and to streamline.

Before moving such services to the cloud, you can, as with every network disaster plan, ask yourself “what can I do to mitigate some of the possible risks, and at what price?” Since there’s a direct correlation between investment and availability, you can use this formula to help assess the risk:

costs < (loss in revenue × probability of occurrence)
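A minimal sketch of how that comparison might be applied is shown below. The function names and the example figures are hypothetical and chosen purely for illustration; only the inequality itself comes from the formula above.

```python
def expected_loss(loss_in_revenue, probability_of_occurrence):
    """Expected loss: revenue at risk weighted by how likely the event is."""
    if not 0.0 <= probability_of_occurrence <= 1.0:
        raise ValueError("probability_of_occurrence must be between 0 and 1")
    return loss_in_revenue * probability_of_occurrence


def mitigation_is_worthwhile(cost, loss_in_revenue, probability_of_occurrence):
    """True when the mitigation costs less than the expected loss it guards against."""
    return cost < expected_loss(loss_in_revenue, probability_of_occurrence)


if __name__ == "__main__":
    # Hypothetical figures: an outage would cost 500,000 in lost revenue
    # and is judged to have a 5% chance of occurring in the period considered.
    print(mitigation_is_worthwhile(20_000, 500_000, 0.05))  # cost below expected loss
    print(mitigation_is_worthwhile(30_000, 500_000, 0.05))  # cost above expected loss
```

With these made-up numbers the expected loss is about 25,000, so a 20,000 mitigation passes the test and a 30,000 one does not. The cost and the probability should cover the same time period for the comparison to be meaningful.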

The Hybrid

It doesn’t have to be one or the other. Companies might choose to host less sensitive data in the cloud and critical information in a data centre, or implement a Hybrid Cloud approach combining on-premise, private cloud and third-party services, with orchestration between the platforms. Allowing workloads to move between private and public clouds, as computing needs and costs change, gives businesses more data deployment options and greater flexibility.

Supporting the transition

Paessler’s vision is to ensure computers and networks (the foundation of modern society) work reliably at all times. Paessler understands that cloud-based computing opens new paradigms for development, usage, management and IT professionals. Our mission is to support IT professionals during this shift, helping them to maintain network uptime. Nothing should impact the network, regardless of where it sits: data centre or cloud.

PRTG Network Monitor is a unified network monitoring solution that can monitor both your data centre and your cloud services. The IT administrator gets a global view of their IT estate so they can intervene and take control where necessary. Even if IT infrastructure and services are hosted elsewhere, it doesn’t mean the responsibility is.

by LDeX Group | Mar 12, 2018 | Blog, Press

5G has been talked about for a number of years but the quest is really starting to heat up with actual implementations on the near horizon – which means a lot more data.

This week at Barcelona’s Mobile World Congress, Ericsson declared 5G ‘open for business’, while several other companies have been keen to show off their handiwork too.

One of these is Huawei, with the company announcing an agreement with BT Group to extend their strategic partnership with a clear focus on ensuring 5G leadership for BT Group and its mobile network, EE.

The partnership involves development and live trials of 5G New Radio (NR), core network technology, and 5G customer premises equipment (CPEs). The aim is to test real-life 5G performance in a range of environments in preparation for commercial launch.

“Our 5G research has been hugely promising, and this partnership with Huawei will turn that research into reality,” says BT Group CTIO Howard Watson.

“Huawei has helped us drive the evolution of the EE 4G network, and they are the ideal partner to help us push the barriers of 5G.”

BT and Huawei started joint work on 5G research and development in 2016 and have a wide-ranging research collaboration agreement. In November 2017, they announced the completion of the UK’s first 5G end-to-end lab testing, delivering consistent 2.8Gbps downlink throughput and sub-5ms latency.

Huawei 5G product line president Yang Chaobin says BT is one of Huawei’s most important global customers.

“Signing this new agreement is recognition of our leading position in the 5G field. We are confident that this further deepening of our partnership will show that our end-to-end 5G solution – from network to device – leads the industry,” says Yang.

“This partnership demonstrates our ability to deliver and support the successful deployment of a 5G commercial network to our customers.”

Meanwhile, Ericsson and MTS have announced an agreement to establish a 5G research centre in Innopolis, a new Russian high-tech city located in the Republic of Tatarstan.

The two companies will leverage their expertise, latest technologies, and partner ecosystem to build prototypes and explore new business opportunities with 5G and Internet of Things for Smart Cities, with possible extension to other areas.

Their activities will begin in the second quarter of this year and in addition to joint research and development projects, Ericsson and MTS plan to organise hackathons and collaborate with local partners, startups, and residents. The idea is to develop solutions that can be deployed and evaluated locally before being scaled for the global market.

“We are conducting research both in-house and in partnership with key suppliers, continuously testing new solutions. Today, we reached a new stage of our technological cooperation with Ericsson,” says MTS procurement and administration vice president Valery Shorzhin.

“The launch of a joint R&D Center will significantly accelerate the introduction of innovative products to the market. We hope that the research work of both companies will allow us to bring new developments not only to the Russian but also to the global market.”

The infrastructure to be used at the research center includes the Ericsson IoT Accelerator Platform and Massive IoT solutions supporting NB-IoT.

“Ericsson will provide its latest 5G and IoT technologies, global expertise and access to a worldwide ecosystem of partners and universities as well as support of key Business Labs,” says Ericsson Russia president Sebastian Tolstoy.

“The R&D Center in Innopolis will become an important part of Ericsson’s global R&D program for development and launch of IoT innovations to drive the large-scale uptake of IoT in Russia and beyond.”

by LDeX Group | Mar 12, 2018 | Blog, Press

Two universities have become the first UK academic organisations to deploy IBM’s POWER9 system.

Queen Mary University of London (QMUL) and Newcastle University are now benefiting from the system for modern high-performance computing (HPC), analytics and artificial intelligence (AI) workloads.

The universities recently took delivery of the systems, which OCF will integrate into their existing HPC infrastructures.

According to QMUL professor Sean Gong, QMUL needs the latest technology in order to enable its researchers to excel in areas where they are using deep learning to solve some of today’s toughest scientific challenges.

The university became one of the first organisations to purchase two IBM Accelerated Compute Servers (AC922) powered by POWER9 CPUs, Volta GPUs and NVLink 2.0 interconnects.

“QMUL is a world-leader in deep learning research in post-event video forensics and analysis. Deep learning by large-scale convolutional neural networks – a category of neural networks that has been proven effective in areas such as image recognition and classification – has radically changed research into computer vision in recent years,” says Gong.

“Given our previous test trials on IBM Minsky POWER8 servers, we expect to see significant benefit from the new POWER9 servers for deep learning on big video data.”

Newcastle University co-director of the joint quantum centre Carlo Barenghi says researchers within the mathematics, statistics and physics departments were looking to use a GPU-accelerated platform for computational projects.

“The major difficulty we face is that our calculations are non-linear, time-dependent and three-dimensional, so solving them is out of reach with pencil and paper, and the numerical computation requires large memory and fast speed – we are humbled from the start,” says Barenghi.

“We did some investigations and the IBM POWER9 system was the best technology for our work – in trial runs we got a ‘speed up factor’ in the order of 10x magnitude, so the decision was easily made.”

In terms of the technical aspects, the AC922 makes use of IBM’s new POWER9 processor with a multitude of modern connectivity capabilities, which improves data movement by up to 5.6 times over the PCIe Gen 3 ‘buses’ found in x86 servers.

OCF managing director Julian Fielden says IBM Power Systems are unlocking new potential for accelerated computing given they deliver the only architecture enabling NVLink between CPUs and GPUs.

“Modern AI, HPC and Analytics workloads are driving an ever-growing set of data intensive challenges,” says Fielden.

“These challenges can only be met with accelerated infrastructure, such as IBM’s POWER9. In such a highly competitive field as academic research, providing superior HPC services to compute large quantities of data quickly, can help to attract world-class researchers, as well as grants and funding.”

by LDeX Group | Mar 12, 2018 | Blog, Press

The cloud computing arm of Alibaba Group has launched eight products for the European market at the Mobile World Congress taking place in Barcelona.

For the first time Alibaba Cloud’s advanced big data and AI capabilities enabled by super computing power will be made available in Europe.

Alibaba Cloud asserts these new products will meet the surging demand for powerful and reliable cloud computing services as well as advanced AI solutions among European enterprises to support them to capture opportunities in the digital transformation era.

The new products targeted at European enterprises fall into a number of inter-related categories.

Alibaba is launching three products in relation to data technology and AI: Image Search solutions that allow users to search for information online and offline using images; Intelligent Service Robot, a chatbot for business; and Dataphin, a data engine designed to cope with cross-industry big data development, management and application needs.

And with infrastructure and security crucial as the foundation for enterprises undertaking cloud migration, Alibaba Cloud is launching ECS Baremetal Instance, a new high performance computing solution of ECS that combines the strengths of virtualised systems and bare metal servers. Alibaba Cloud asserts that when connected as a supercomputer, ECS Baremetal becomes a Super Computing Cluster that will reduce network latency to the level of microseconds while offering elasticity and supercomputing capabilities.

The company is also introducing its next generation Cloud Enterprise Network (CEN) and Vulnerability Discovery Service, a high-performance security solution.

After a successful three-year track record in China, Alibaba Cloud is launching the international version of Apsara Stack, a scalable hybrid cloud services platform, for European enterprises.

“Alibaba Cloud wants to be an enabler for technology innovation in Europe helping enterprises do business,” says Alibaba Cloud Europe general manager Yeming Wang.

“The Mobile World Congress in Barcelona is a great opportunity for us to refresh our European strategy and consider how we can make an increasing contribution to the digital transformation of enterprises in this market from different sectors with our offerings and expertise.”

According to Alibaba Cloud, the data intelligence solutions have many success stories both in China and around the world. Image Search is widely applied in a number of scenarios in China including New Retail, a concept that seamlessly converges online and offline retail.

The intelligent service robot served more than 40 million customers in a single day during last year’s 11.11 Global Shopping Festival.

Dataphin, the system for intelligent data creation and management, is now managing 95 percent of the entire Alibaba Group’s data and driving all types of data intelligence innovations within the group’s business ecosystem from retail to finance, logistics, transport and health.

“The decision making around how a business is to operate in the digital age is increasingly a strategic one. To meet their changing needs, we are able to leverage our practical experience of digital transformation and successes accumulated in China to the benefit of European enterprises,” Wang says.

“These advanced solutions will enable organisations in a wide range of sectors and will bring them true connectivity, both locally and globally. For Alibaba Cloud, this is the true meaning of inclusive technology.”

Alibaba Cloud has affirmed it is committed to investing in cloud computing services and digital infrastructure in Europe.

The company launched its first availability zone in Frankfurt, Germany in late 2016 and recently commenced operation of the second availability zone in the same region.

Alibaba Cloud has already established a number of partners in Europe, including Vodafone in Germany, the Met Office which is the national meteorological service for the UK, and Station F, an innovation hub in France.